High maintenance overhead: We needed to maintain HFileService and DynamoDB at the same time.Improve read performance under spiky write traffic.Scale-out challenge: It was cumbersome to manually edit partition mappings inside Zookeeper with increasing data growth, or to horizontally scale the system for increasing traffic by adding additional nodes.However, there were opportunities for improvement: In Stage 3, we built a system that supported both read and write on real-time and batch-update data with timestamp-based conflict resolution. Stage 3 (2018): Scalable and low latency key-value storage engine (Mussel) Zookeeper was used to coordinate write availability of dynamic tables, snapshots being marked ready for read, and dropping of stale tables.

To minimize online merge operations, Nebula also had scheduled spark jobs that ran daily and merged snapshots of DynamoDB data with the static snapshot of HFileService. For read requests, data would be read from both a list of dynamic tables and the static snapshot in HFileService, and the result merged based on timestamp. Nebula introduced timestamp based versioning as a version control mechanism. It internally used DynamoDB to store real-time data and S3/HFile to store batch-update data. Therefore, Nebula was built to support both batch-update and real-time data in a single system. As Airbnb grew, there was an increased need to have low latency access to real-time data. For example, it didn’t support point, low-latency writes, and any update to the stored data had to go through the daily bulk load job. While we built a multi-tenant key-value store that supported efficient bulk load and low latency read, it had its drawbacks. Stage 2 (10/2015): Store both real-time and derived data (Nebula) Then queried Zookeeper to get a list of servers that had those shards and sent the request to one of them Clients determined the correct shard their requests should go to by calculating the hash of the primary key and modulo with the total number of shards. 
Different data sources were partitioned by primary key.Each server downloaded the data of their own partitions to local disk and removed the old versions of data A daily Hadoop job transformed offline data to HFile format and uploaded it to S3.We configured the number of servers mapped to a specific shard by manually changing the mapping in Zookeeper The number of shards was fixed (equivalent to the number of Hadoop reducers in the bulk load jobs) and the mapping of servers to shards stored in Zookeeper.Servers were sharded and replicated to address scalability and reliability issues.So we built HFileService, which internally used HFile (the building block of Hadoop HBase, which is based on Google’s SSTable): MySQL doesn’t support bulk loading, Hbase’s massive bulk loading (distcp) is not optimal and reliable, RocksDB had no built-in horizontal sharding, and we didn’t have enough C++ expertise to build a bulk load pipeline to support RocksDB file format. Multi-tenant storage service that can be used by multiple customersĪlso, none of the existing solutions were able to meet these requirements.Efficient bulk load (batch generation and uploading).

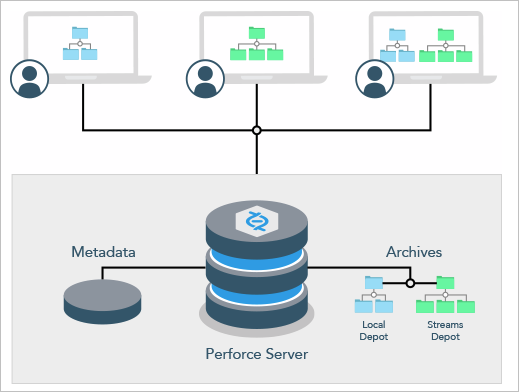

Stage 1 (01/2015): Unified read-only key-value store (HFileService)īefore 2015, there was no unified key-value store solution inside Airbnb that met four key requirements: This evolution can be summarized into three stages: Over the past few years, Airbnb has evolved and enhanced our support for serving derived data, moving from teams rolling out custom solutions to a multi-tenant storage platform called Mussel. In this post, we will talk about how we leveraged a number of open source technologies, including HRegion, Helix, Spark, Zookeeper,and Kafka to build a scalable and low latency key-value store for hundreds of Airbnb product and platform use cases. For example, the user profiler service stores and accesses real-time and historical user activities on Airbnb to deliver a more personalized experience. These services require a high quality derived data storage system, with strong reliability, availability, scalability, and latency guarantees for serving online traffic. Within Airbnb, many online services need access to derived data, which is data computed with large scale data processing engines like Spark or streaming events like Kafka and stored offline.
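The client-side routing described for HFileService (hash the primary key, take it modulo the fixed number of shards, then pick one of the servers holding that shard) can be sketched roughly as follows. This is only an illustration: the shard count, the MD5-based hash, the server names, and the in-memory `SHARD_TO_SERVERS` dictionary standing in for the Zookeeper mapping are all assumptions, not details from the actual system.

```python
import hashlib
import random

# Hypothetical fixed shard count (the post says it matched the number of
# Hadoop reducers in the bulk load job; the value 8 is made up).
NUM_SHARDS = 8

# Hypothetical stand-in for the shard -> servers mapping kept in Zookeeper.
SHARD_TO_SERVERS = {
    shard: [f"server-{shard}-a", f"server-{shard}-b"]
    for shard in range(NUM_SHARDS)
}


def shard_for_key(primary_key: str, num_shards: int = NUM_SHARDS) -> int:
    """Map a primary key to a shard: stable hash, then modulo shard count."""
    # MD5 is an assumed choice; any hash that is stable across clients works.
    digest = hashlib.md5(primary_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def pick_server(primary_key: str, mapping=SHARD_TO_SERVERS, rng=random) -> str:
    """Look up the replicas for the key's shard and choose one of them."""
    shard = shard_for_key(primary_key, len(mapping))
    return rng.choice(mapping[shard])
```

Because the shard is a pure function of the key and the shard count, every client routes a given key to the same shard. It also shows why a fixed shard count makes scale-out awkward: changing `NUM_SHARDS` changes where most keys land.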
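Nebula's read path, where a key is looked up in both the dynamic tables and the static snapshot and the results are merged by timestamp, might look something like the sketch below. The dictionary-based tables, the `(timestamp, value)` tuple layout, and the function name `merged_read` are hypothetical; the real system read from DynamoDB and HFileService rather than in-memory dictionaries.

```python
from typing import Dict, Iterable, Optional, Tuple

# Assumed record layout: (timestamp, value). Larger timestamp means newer.
Versioned = Tuple[int, str]


def merged_read(
    key: str,
    dynamic_tables: Iterable[Dict[str, Versioned]],
    static_snapshot: Dict[str, Versioned],
) -> Optional[str]:
    """Return the value with the newest timestamp across all sources."""
    # Collect every version of the key found in the real-time tables.
    candidates = [table[key] for table in dynamic_tables if key in table]
    # Add the batch-loaded version, if the snapshot has one.
    if key in static_snapshot:
        candidates.append(static_snapshot[key])
    if not candidates:
        return None
    # max() keeps the first maximal element, so on a timestamp tie a dynamic
    # table wins over the snapshot (an assumption, not stated in the post).
    return max(candidates, key=lambda version: version[0])[1]
```

Every read here touches all sources, which illustrates why the daily Spark job that folds the dynamic data into a fresh static snapshot helps: it keeps the number of versions merged online small.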
